AI marking – Can it do the job?

That depends on what you mean by ‘do’, and which part of the marking process you’re referring to, says Anthony David…

- by Anthony David

- Executive headteacher, consultant, author and leadership coach

Walk around Bett, or any major edtech conference, and you’ll hear the same confidently delivered sales lines: AI will give you your evenings back. These promises usually centre on marking – upload a stack of essays, and you’ll instantly receive a set of grades, personalised feedback and even moderation support. Job done.

It’s an attractive idea. In secondary schools across England, marking remains one of the most time-intensive and emotionally draining aspects of the job.

Extended responses, coursework drafts, exam practice, internal assessments, mocks. Even where whole class feedback has replaced written comments, the cognitive load involved in reading, evaluating and diagnosing misconceptions remains substantial.

So can an AI actually do your marking for you? For that matter, should it? The honest answer is more nuanced than the marketing might suggest…

Where AI can help with marking

AI performs best when you give it a structured task and explicit criteria, and keep the stakes relatively low.

AI can, for example, generate first-pass feedback on written work. Given a clear rubric and a piece of student writing, most current systems can identify surface-level issues, such as missing structure, weak topic sentences, lack of evidence, repetitive vocabulary or unclear explanation.

For teachers who already know what they’re looking for, this can remove the ‘blank page’ problem – rather than starting your feedback from scratch every time, you’re instead refining and adjusting.

AI can also support rubric alignment. When assessment criteria are clearly defined and exemplified, AI can sort responses into likely bands and highlight where students have met or missed specific criteria.

This isn’t professional judgement; it’s pattern recognition against provided descriptors. When the rubric is strong, the outputs tend to be stronger.

A third, and perhaps especially interesting, use of AI for English in schools is to support moderation. Some emerging providers, including AIR Education, are positioning their platforms as tools that will allow teachers to upload pupil work and receive assessment analysis aligned to frameworks, with the potential to enable quicker internal standardisation.

The appeal here isn’t just speed – it’s consistency. Instead of waiting weeks or months to co-ordinate moderation across departments or partner schools, teachers can receive earlier signals about whether judgements are drifting.

Stretching credulity

Visitors to Bett and other such events will likely have seen companies promoting products that promise instant feedback on handwritten GCSE responses, thus compressing the time between student submission and meaningful response.

This is a powerful idea in principle, since the shorter the feedback loop, the greater the potential impact on learning.

Such claims are not entirely empty – AI can indeed reduce certain kinds of workload – but they only tell half the story.

These claims start to stretch credulity when they bump up against the fact that AI struggles with assessment requiring deep subject expertise, contextual interpretation and professional judgement.

In subjects such as English literature, history, RE or politics, the quality of an answer doesn’t just come down to structure; it’s just as much about a student’s ability to deploy insight and nuance, and demonstrate conceptual understanding and disciplinary thinking.

A response can be technically sound yet intellectually thin or, equally, unconventional yet perceptive. AI often struggles to make such distinctions.

Confident errors

Then there’s the issue of fairness. An AI can be consistent while still being wrong. If its training data or prompts reflect narrow norms, it could misinterpret writing from EAL students, neurodivergent learners or pupils whose expression doesn’t follow typical patterns.

Ofqual has been clear in its commentary on AI use in marking that technical capability, fairness and transparency all matter.

Even in regulated qualifications, the question isn’t simply whether AI can mark, but whether it can do so reliably and equitably.

Additionally, there’s the problem of confident errors. AI systems can produce highly plausible feedback that’s factually incorrect, or entirely misaligned with the task at hand.

In marking terms, this would amount to praising a misconception because it’s well expressed, inventing errors that aren’t present or overlooking key misunderstandings.

If teachers accept AI outputs uncritically, they risk increasing, rather than reducing, their workloads through corrections and reworking.

Relief from repetition

When teachers say they ‘want AI to do their marking’, they’re rarely asking to outsource their professional judgement. What they’re actually asking for is relief from repetition.

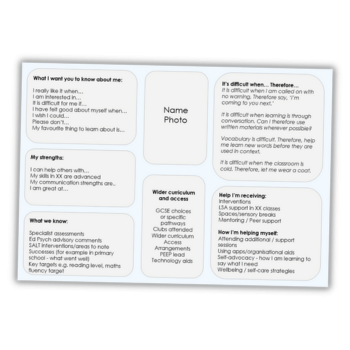

It could therefore help to break the process of marking down into the following three components:

- Evaluation – deciding what standard the work has reached against agreed criteria

- Explanation – articulating why that judgement has been made, with reference to evidence in the work

- Next steps – identifying precise actions the student should take to improve

AI can be most helpful with the ‘explanation’ and ‘next steps’ components – drafting comments, suggesting targets and highlighting patterns across a class.

It’s far weaker when asked to replace evaluation entirely, particularly within high-stakes contexts.

The moment AI starts making final evaluative decisions, the stakes change. Marks aren’t just numbers; they influence self-perception, parental trust and institutional accountability.

Even in formative settings, grades carry social weight. For now, the safest model isn’t ‘AI as marker’, but ‘AI as assistant’. It suggests, you decide.

Professional risk

Before embracing AI-enabled marking, secondary leaders and teachers need to consider three practical risks, starting with data protection.

Uploading pupils’ work – particularly handwritten scripts or anything containing personal data – will require clarity around how it is stored and processed, and whether it is used for model training.

Claims of GDPR compliance are necessary, but not sufficient. Schools will require due diligence processes and clear AI policies about what can and can’t be shared.

Next, reliability. When an AI-generated judgement is challenged, the AI can’t attend a parent meeting, but the teacher can.

Consequently, every AI-supported decision must be reviewable and defensible. If you can’t explain how a grade was reached without referring to an opaque system, you’ll be on shaky ground.

Finally, there’s the matter of integrity. The Joint Council for Qualifications has issued guidance regarding the use of AI in assessments, emphasising the need to protect qualification integrity.

In a context where students may themselves be using AI to generate their work, schools must tread carefully when introducing AI into marking. Transparency and documentation matter.

A way forward with AI marking

If you want to explore AI marking responsibly, start small. Choose a low-stakes assessment type and then define a tight rubric with clear exemplars.

Use AI for first-pass feedback and band suggestions, but retain human sign-off. Track disagreement rates between teacher judgements and AI outputs.

If the discrepancies seem high, refine the rubric or stop the pilot. Used in this way, AI can become a workload reduction tool rather than a decision maker.
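For departments that want to put a number on 'disagreement', the check can be as simple as a short script. What follows is a minimal sketch in Python, not a feature of any particular product: the `MarkedScript` record, the band values, the sample data and the 25% review threshold are all illustrative assumptions. Each department would need to set its own.

```python
from dataclasses import dataclass


@dataclass
class MarkedScript:
    """One piece of work from the pilot (hypothetical record layout)."""
    script_id: str
    teacher_band: int  # band awarded by the teacher: the judgement of record
    ai_band: int       # band suggested by the AI tool


def disagreement_rate(scripts: list[MarkedScript], tolerance: int = 0) -> float:
    """Share of scripts where the AI band differs from the teacher band
    by more than `tolerance` bands."""
    if not scripts:
        raise ValueError("need at least one marked script")
    disagreements = sum(
        1 for s in scripts if abs(s.teacher_band - s.ai_band) > tolerance
    )
    return disagreements / len(scripts)


def scripts_to_review(scripts: list[MarkedScript], tolerance: int = 0) -> list[str]:
    """IDs of the scripts where teacher and AI disagree, for human moderation."""
    return [
        s.script_id
        for s in scripts
        if abs(s.teacher_band - s.ai_band) > tolerance
    ]


# Illustrative data only, not real pupil results.
pilot = [
    MarkedScript("A01", teacher_band=4, ai_band=4),
    MarkedScript("A02", teacher_band=3, ai_band=4),
    MarkedScript("A03", teacher_band=5, ai_band=3),
    MarkedScript("A04", teacher_band=2, ai_band=2),
]

REVIEW_THRESHOLD = 0.25  # assumed cut-off; agree your own as a department

rate = disagreement_rate(pilot)
if rate > REVIEW_THRESHOLD:
    print(f"Disagreement rate {rate:.0%}: refine the rubric or pause the pilot")
    print("Scripts to moderate by hand:", scripts_to_review(pilot))
```

Setting `tolerance=1` counts only disagreements of more than one band, which may be a fairer test where band boundaries are genuinely fuzzy. Either way, the teacher's band remains the judgement of record; the script only shows how often the tool departs from it.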

Where instant moderation tools may prove particularly valuable is in internal standardisation. If departments can identify drift earlier, and focus human moderation time on borderline or contentious scripts, the efficiency gains could be real. Faster alignment will mean faster and more consistent feedback for students.

If the question is whether AI can replace the professional judgement of a secondary teacher in England, however, then the answer is no. Not safely, not ethically and not with the reliability required in high-stakes systems.

If, on the other hand, the question is whether AI can reduce the time spent on drafting repetitive feedback, spotting common patterns and supporting internal moderation, then the answer is yes – albeit with clear guardrails.

The danger isn’t that AI will assume your role; the danger is that it will be adopted uncritically and trusted too much, or dismissed entirely.

For now, the most productive stance is professional curiosity. Treat AI marking tools just as you would a new TA. Train them with clear rubrics. Check their work regularly and use them to amplify your expertise, not replace it.

Your evenings might not return overnight, but when used wisely, AI could help you spend less time writing the same comment for the 20th time, and more time thinking about what your pupils actually need next.

Anthony David is an executive headteacher and author of the book Education With AI – an analysis of how AI can support education at all levels, what pedagogy will be impacted and why we should (and possibly should not) be adopting this technology.