What if Schools Don’t Make a Difference?

If we limit our judgement of education to that which can be measured, then we risk ‘mistaking the map for the land’, warns Gareth Sturdy…

How good is British education these days? Once upon a time we thought it was the envy of the world, but is it still so?

The question is troubling enough, but there’s a worse one lurking behind it: how do we know what quality in education looks like, anyway?

This last query has obsessed governments for a generation now.

The same conundrum, in a slightly different form, is also a preoccupation of parents, at least those with 10-year-old children: how do we judge which of the local secondary schools is the best one?

The search for an answer has thrown up a plethora of methods: national curriculum levels, SATs scores, GCSE and A-level results, Ofsted judgements, local, regional and national school league tables, the ALPS system, the EBacc, Progress 8, Attainment 8… on and on, all the way up to the PISA rankings devised by the OECD.

Each of these is a different method of assessing Educational Attainment (EA). They are the various ways of slicing the educational pie chart, and they have one thing in common: numbers.

They all rest on the same philosophical underpinning, which is that where you have something as nebulous and intangible as quality in education, numerical proxies can – and must – be brought to bear.

Kidding ourselves

Of course, everybody knows that proxies are flawed and that their use is an imperfect science.

Nevertheless, so the logic runs, EA indicators are necessary because there is no alternative. We must have some way of evaluating the performance of our schools, colleges and universities.

And that’s where I believe we run into trouble. A concept from physics known as the Measurement Problem can act as a useful analogy.

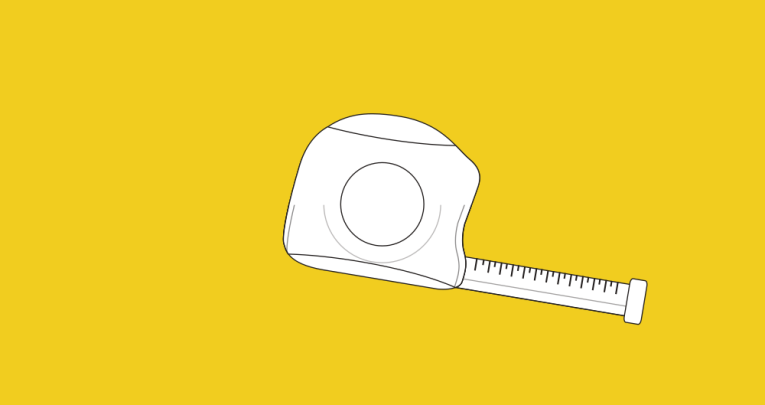

When trying to turn an indeterminate phenomenon into something you can measure, you cannot escape first having to make a subjective judgement.

Before answering the question ‘how long is a piece of string?’ you first have to decide what kind of ruler you’re going to use and where you’re going to put it.

All measurement is founded on subjective bases.

When it comes to simple physical qualities such as length or mass, the Measurement Problem can be dismissed by using international standards and protocols such as the Système International, which keeps perfect metres and kilograms to which all others can be compared.

But where is the standard pupil, teacher or school to which all can be reliably compared?

EA is an attempt to kid ourselves that there is some standard, that schools can be scientifically measured, compared and thus improved.

This is folly, because we can never truly compare like with like.

And it is dangerous, because we end up in the predicament described by DH Lawrence, in which “the map appears more real to us than the land.”

EA indicators inevitably lead to improvement targets for individuals and organisations, which in turn lead to the fulfilment of Goodhart’s Law: when a measure becomes a target it ceases to be a good measure, because people instinctively distort their whole enterprise in order to fulfil the target.

Numerical educational attainment measures end up being mistaken for education itself.

Because the measures are easy to deal with, whereas education itself is elaborate and complex, the meaning of education becomes narrowed and reduced, and can disappear altogether.

All we’re left with are the attainment indicators.

Before measures

At a recent public discussion organised by the Academy of Ideas Education Forum, we discussed the latest incarnation of this process: the work of behavioural geneticist Robert Plomin, as set out in his recent book Blueprint: How DNA Makes Us Who We Are.

The debate was titled after one of the chapters in the book, ‘schools matter but they don’t make a difference’.

Plomin’s point is that, statistically speaking, the differences between those who do and don’t do very well in education map more coherently onto the genetic differences between the two groups than any other kind of difference.

It looks like our genes come out on top as the most powerful drivers in educational development.

Plomin has, predictably, been widely criticised by educationalists for what they detect as the intrusion of determinism into education.

However, I believe Plomin’s provocative adage could be very useful to those of us who seek to make education less deterministic.

To say that schools matter but don’t make a difference is to prompt the question, ‘to what?’.

By talking of making a difference, Plomin falls into Lawrence’s trap of mistaking the map for the land.

He is referring to making a difference to EA scores. But this is not education. In fact, I think Plomin does understand this distinction, which is why he also says schools matter.

Education Forum member Alka Sehgal Cuthbert made a good point during our debate. We have had good schools for hundreds of years but EA indices for only a few decades.

What was it we were doing for all that time before the statistical measures came in? How did we know then that we were, or weren’t, educating well?

A dangerous truth

Plomin is no doubt right that our genes are as heavily influential in the classroom as in any other aspect of life.

Schools may not make a difference in any way that you can measure. All that really tells me is that measuring schools is mostly a fool’s errand.

We shouldn’t stop there, however. We should take Plomin’s work to its radical conclusion.

If schools don’t make a difference to EA scores, yet still matter, then proxies don’t really matter.

It’s time for those of us teaching young people to proclaim a dangerous truth.

Nothing worth doing in education can be measured.

It’s a radical claim, though, and those brave enough to make it should expect challenge from the establishment at every turn.

It’s the very idea governments of every stripe are desperate to deny.

For removing their faith in numbers and proxies would take away their role. They’d be left with nothing to do.

In the absence of indicators, the political class would be forced to admit that it doesn’t really understand what it is that teachers do.

Ultimately it’s a cry for freedom, so shout it loud: nothing worth doing in education can be measured.

Join the conversation

The Academy of Ideas Education Forum gathers monthly to discuss trends in education policy, theory and practice. Find out more at academyofideas.org.uk/forums/education_forum.

Gareth Sturdy teaches English and mathematics in south London. He can be found on Twitter @stickyphysics.