AI in education – Expert tips for using it in your classroom

There are lots of big promises surrounding AI, but in reality it’s already changing the way we teach. Get on board with this advice from fellow teachers…

- by Teachwire

Lots of educators are already using AI in education to save time and automate some of the duller aspects of the job. (Report writing, anyone?)

In fact, according to the survey tool Teacher Tapp, four in ten teachers are already using AI in their schoolwork. Educational technologist and former deputy headteacher, Mel Parker, predicts that with budget cuts and teacher shortages, AI in 2024 will become “an essential tool to support teachers in their day-to-day role”.

Here’s how you can use AI in your classroom to save time and improve your teaching…

- Time-saving AI teaching resources

- Example of teachers using AI in lessons

- Podcasts about AI in education

- What about online safety?

- What if ChatGPT actually knew about education policy?

- How to create a digital strategy that embraces generative AI

- What role could AI play in art and design lessons?

- Will AI really change how we teach?

- Are robots coming for teachers’ jobs?

- The AI homework problem

- How AI can save teachers time

- Could AI lead to exam-free education?

- Case study: Using AI tech to teach DT at Seven Kings School

Time-saving AI teaching resources

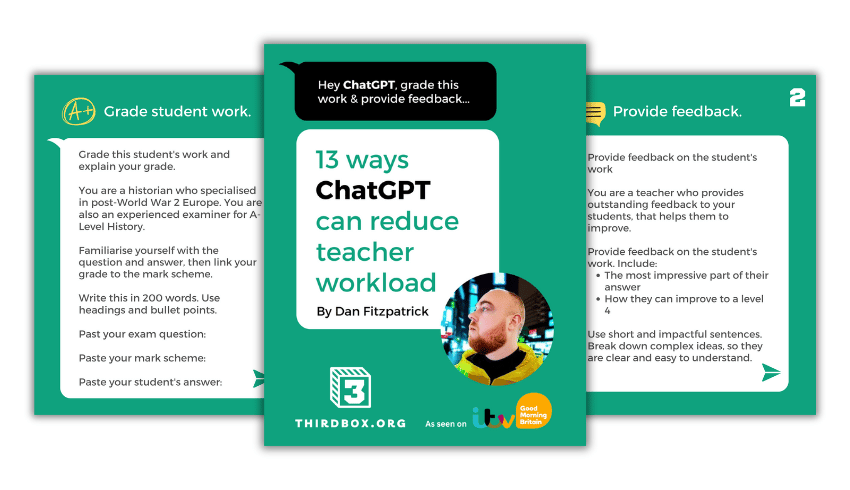

13 ways ChatGPT can reduce teacher workload

This free guide from Dan Fitzpatrick covers using ChatGPT to:

- Mark work

- Provide feedback

- Model answers

- Create a unit of work or lesson plan

- Create questions/lesson booklets

- Make a presentation

- Write reports and parents’ evening notes

- Create a curriculum intent document

Dan also offers a ChatGPT Survival Kit video course.

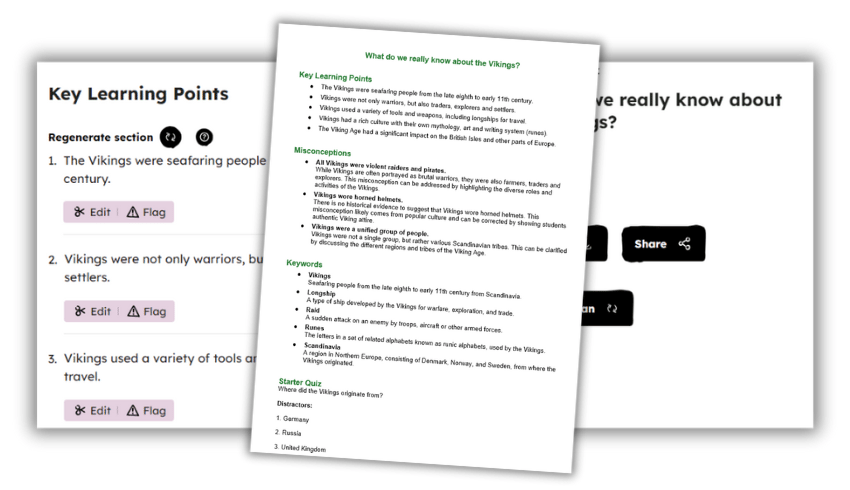

AI lesson plan generators

Picture this: no more late nights drowning in paperwork. Instead, just a trusty assistant crafting lesson plans at the speed of light. Say goodbye to monotony and hello to more coffee breaks with the new raft of AI lesson plan generators that are popping up around the internet.

One such example is Oak AI Experiments, which is supported by a £2 million investment from the government. This free tool allows you to:

- create and refine keywords

- share common misconceptions

- outline essential learning objectives

- build an introductory and exit quiz

Here we’ve asked it to generate a KS2 history lesson under the title, ‘What do we really know about the Vikings?’. Beware, though: as Daisy Christodoulou points out, AI is prone to making factual errors. For this reason, it’s important to check the outputs it generates.

Why not give it a go yourself? Cheers to stress-free planning! You can also experiment with generating quizzes for your students. The AI-driven tool generates answers and distractors in a format that you can share or export.

Prime Minister Rishi Sunak commented on these AI tools, saying: “Oak National Academy’s work to harness AI to free up the workload for teachers is a perfect example of the revolutionary benefits this technology can bring.”

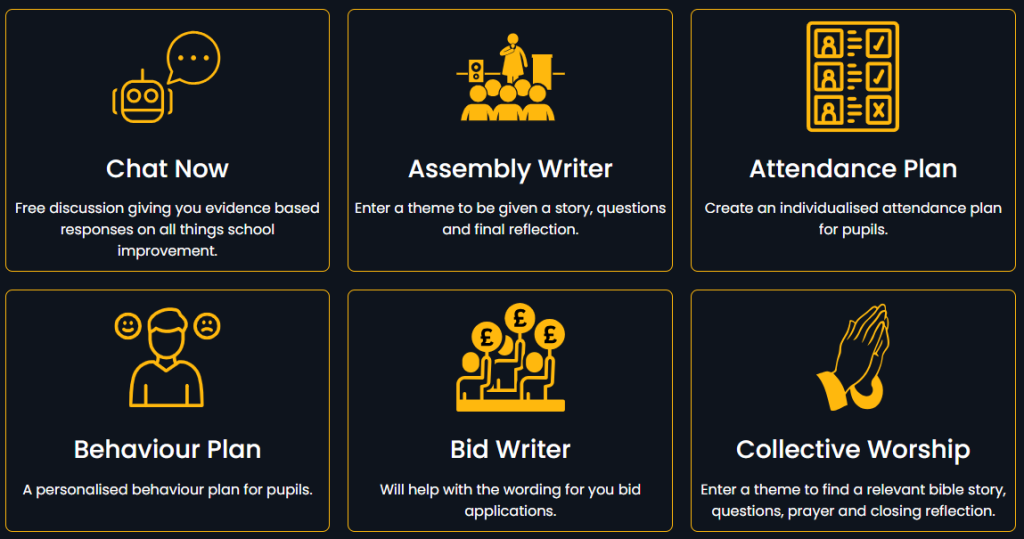

AI tools for school leaders

Former headteacher Craig McKee has created SLT AI to reduce the workload of senior leaders in schools. Unlike ChatGPT, this model has been trained on the latest information from the DfE, EEF and Ofsted. This means you should get an informed response based on the information it has been given. Some of the tools it offers include:

- Evidence-based responses on all things school improvement

- Personalised behaviour plans for pupils

- Bid writing applications

- Deep dive preparation

- Suggested points and wording for difficult conversations

- Help with interview questions and job adverts

Read more from founder Craig McKee below.

AI school report writers

Imagine if you could write all your school reports in a few hours, rather than the process dragging on for several weeks?

Tools such as TeachMateAI promise to help you do this. In fact, one primary teacher reported that the tool has reduced her report writing to “4-5 hours, maximum.”

This particular website, built by teaching and tech experts including Mr P, can also help you to:

- generate slideshows

- write comprehension and model texts

- generate maths word problems

- plan your lessons

Another website offering a similar service is Real Fast Reports. Here’s an example of how it transforms a set of brief bullet points into a report – meaning you can generate each one in just one to two minutes.

AI design tools from Canva

Canva, the online graphic design tool, has recently launched Classroom Magic, a suite of AI tools for the classroom. This includes Magic Write, which allows you to generate a first draft, reword complex content or summarise text.

Meanwhile, Magic Animate helps you to turn classroom materials into captivating videos and presentations in an instant. Magic Grab allows you to grab the text from, for example, a photo of a whiteboard or a paper-based resource, without having to rewrite it manually.

Magic Switch means you can reformat teaching materials instantly. So, for example, you can turn a presentation into a summary document or a whiteboard of ideas into a presentation.

Teacher’s prompt guide to ChatGPT

This Teacher’s Prompt Guide to ChatGPT will teach you how to effectively incorporate ChatGPT into your teaching practice and make the most of its capabilities. The guide covers generating prompts that will:

- Encourage students to think critically and solve problems

- Encourage students to think about their learning process and progress

- Help teachers improve their skills in using data effectively

- Produce open-ended questions that align with the learning intentions and success criteria of your unit of work

- Help you get to know students’ interests, strengths, attitudes towards learning, and aspirations

- Analyse the effectiveness of different teaching strategies

Also check out this list of the best ChatGPT prompts for teachers.

More AI teaching resources

Free programme for KS3/4

Experience AI is a free programme from Google DeepMind and the Raspberry Pi Foundation that gives you everything you need to introduce the concepts of artificial intelligence and machine learning to your KS3/4 classes. It contains lesson plans, slide decks, worksheets and videos.

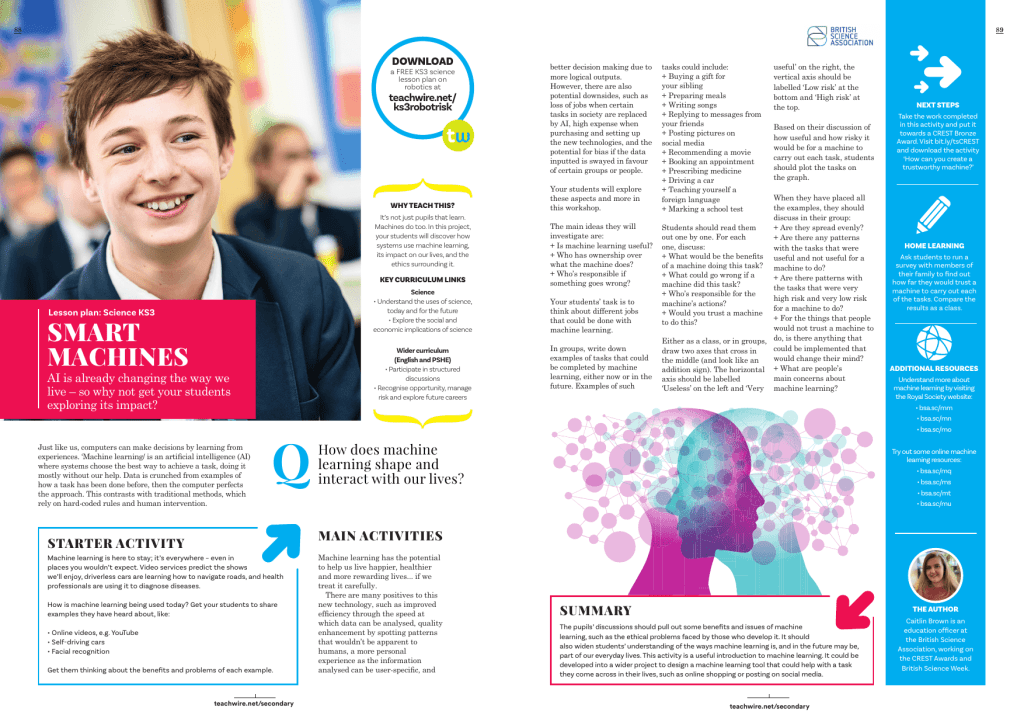

KS3 science AI lesson plan

AI is already changing the way we live. Get your students exploring its impact with this free KS3 science lesson plan by Caitlin Brown. Students will explore how systems use machine learning, its impact on our lives, and the ethics surrounding it.

CotranslatorAI

CotranslatorAI is a free Windows program for language teachers and learners that lets you communicate with OpenAI GPT models without using a web browser. It comes with a collection of pre-written prompts and template prompt tutorials to help you get started. You can input prompts in one language and get AI responses in another language.

Even more resources

- FORESIGHT – an AI-driven assessment tool by Performance Learning

- Maths-Whizz – a personalised learning and assessment tool that uses AI to provide live, ongoing formative assessments

- Lenovo NetFilter – a cloud-based, AI-driven web filtering and threat protection solution

Example of teachers using AI in lessons

Create word banks

Create differentiated statements and definitions

Generate reading resources for a geography enquiry

Scaffold and model independent writing

Generate a Y4 writing lesson plan

Podcasts about AI in education

In this episode of The Edtech Podcast, Nina Huntemann and Lord Jim Knight attempt to understand how best to cut through the white noise surrounding AI’s hype, misinformation, exaggeration and marketing, and determine just how positive for education AI can be if done responsibly.

Dive into the hot topic of AI in education by listening to a podcast with a data and AI specialist who works at Google Cloud. The podcast covers:

- the convenience technology and AI can bring to the sector

- the importance of creating digital learning opportunities for young people

- how to give teachers the confidence to use technology in the classroom

- how to focus on the positives of technology

- safeguarding issues

- the ethical and legal issues surrounding new technologies

More useful resources

- DfE policy paper – Generative artificial intelligence (AI) in education

- The AI Educator Sunday newsletter

- A list of AI educator tools from The AI Educator

What about online safety?

Tasha Gibson, online safety product manager at RM Technology, explains what a teacher’s role might be when it comes to pupils’ use of AI in education and beyond the school gates…

New technologies create opportunities for children to connect with each other, express themselves and learn. However, they also introduce new threats. These can impact the mental health of young people – from cyberbullying to online grooming and exposure to inappropriate content.

A new development in this field is generative AI. While praised as a technological leap forward, the introduction of generative AI tools such as ChatGPT and Google Bard to the mainstream presents yet another two-sided coin.

One side offers students the ability to have their own learning ‘assistant’. This can help increase their understanding of topics taught inside the classroom and enable them to express their creativity in new ways.

The other side has seen teachers warn that pupils are making indecent images of their peers using AI image generators.

How to help children stay safe

What’s certain is that technology has become embedded into our education system. Therefore, its impact, both positive and negative, is felt by students of all ages.

We need to understand how to navigate technological change and help keep students safe online. Here are a few tips you can adopt to help prevent online threats from impacting children’s mental health.

1. Holistic education and structure

While we need to teach students the fundamentals of new technologies, it’s equally important we teach them the ‘rules’ around using them safely.

For example, making the use of new AI tools part of a structured routine can go a long way in helping students create boundaries with technology.

Dedicating sessions to instilling an understanding of what to do when they feel uncomfortable around AI chatbots or AI image generators, as well as empowering students to identify threats and protect themselves by informing responsible adults, can have a significant impact.

This nurtures healthy relationships with technology, which can limit the negative impact it might have on a student’s mental health.

2. Intentional conversation

We must build regular time into our school days to have meaningful conversations about emerging technology, such as AI, in the classroom.

These conversations enable students to express how they feel about technology. You can also uncover deeper insight into how they use it. This can signpost you to any potential online activity that might impact a student’s mental health.

While you should create parameters for the conversation, the onus of these sessions should be on the students to talk among themselves and share how they feel about different platforms.

Indeed, encouraging children to share their thoughts by asking open questions such as ‘What is your favourite website or AI tool?’ can foster an inclusive classroom environment.

It is only by creating an open and safe space to talk that we can understand the full use of these different tools in our classrooms. Then we can offer advice that speaks directly to students.

3. Gaining first-hand experience

By trying out the latest platforms, apps, and games for yourself, it becomes possible to spot potential risks and stay updated on new features which could impact the mental health of students.

Gaining a practical knowledge of AI-powered apps and platforms can also help you better relate to any new challenges students might experience online.

Due to budget cuts and a lack of resources, it can be difficult to join the national call for centralised technology training. However, you can speak to SLT to raise awareness of the need for upskilling and increase demand for digital skills training.

4. Supporting software

Finally, it’s important to acknowledge that the role of teachers today is vast, whilst time is limited. As such, ensuring your school’s digital infrastructure is designed to promote online safety is key.

Integrating web-filtering software that blocks potentially harmful websites or internet searches can support teachers in helping keep students safe online and protect their mental health.

Blocking social media via your school’s internet can also ensure students are focused on their education and not engaging in potentially dangerous online activity.

The relationship between technology and mental health is undeniably complex, especially within the context of schools today. There is no silver bullet solution.

However, by encouraging online safety and creating a safe space to discuss technology inside the classroom, it’s possible to help mitigate threats that could negatively impact students’ mental health.

What if ChatGPT actually knew about education policy?

The capacity for AI to take care of teachers’ admin has been hobbled by its unfamiliarity with the essentials. But that’s about to change, says Craig McKee…

Improbable as it may seem, the generative AI-driven chatbot ChatGPT reached 100,000,000 registered users barely two months after its initial launch in November 2022. Like many, I found myself intrigued by this new ‘do everything for you’ technology and wanted to find out more.

My first impressions were positive. This tool could write emails for me, help with school improvement planning targets – what’s not to love? The more I used it, however, the more I came to realise that its responses were good starting points, but that it lacked specific knowledge of education.

Ask it, ‘What is section 4 of the SEND Code of Practice?’ and you’d likely get a confidently expressed, but wholly inaccurate reply. The CoP was published in 2015, so one might assume that ChatGPT would be familiar with it, but apparently not.

This got me thinking. How much of a genuine game-changer could ChatGPT be if we knew for certain that it was drawing on correct information? If it had absorbed the latest DfE guidance, been given explicit information from Ofsted, or been primed with some of the EEF’s latest research reports?

Imagine that – a ChatGPT that could substantively answer questions put to it on operational matters relating to education…

Training ChatGPT

Enter SLT AI. This is an instance of the ChatGPT engine that’s been trained on 75 documents (and counting) from the DfE, Ofsted, the EEF, the Home Office and other entities. It has the specific aim of reducing workload for school leaders through the practical application of generative AI.

The main SLT AI interface currently features more than 50 distinct tools. There’s everything from focused school improvement, to SIP/SEF writing, deep dive preparation, curriculum progression, composing emails, writing newsletters, producing job adverts and more besides. New tools are being added all the time.

Time-saving tools

I’d have loved having access to a suite of such tools back when I was a headteacher, as it would have allowed me to spend considerably more time with pupils and staff instead of being chained to my desk, buried in paperwork.

Rather than setting aside 30 minutes every Friday morning to write that week’s newsletter, I could have finished it in five minutes. The hours I spent writing my SEF/SIP could have been cut to an hour or less.

I was recently made aware via X/Twitter of a deputy trust CEO who had previously dedicated a whole day to supporting his headteachers with some Deep Dive prep. Using SLT AI’s Deep Dive tool, he was finished in an hour. Not ‘a whole day’ – one hour.

How about you? If you’d like to see how SLT AI could potentially save you hours each week, visit the site. We always welcome suggestions for new features – so together, let’s lead smarter, not harder…

Craig McKee is a former headteacher and the founder of SLT AI; for more information, visit sltai.co.uk or contact info@sltai.co.uk

How to create a digital strategy that embraces generative AI

Al Kingsley, multi academy trust chair and group CEO of NetSupport, discusses the importance of adapting your strategy to take AI into consideration…

Generative AI has undoubtedly seen meteoric growth recently. Conversations in the education sector regarding the potential threats and opportunities have increased in tandem with these technological developments.

Although exciting potential opportunities beckon, concerns are also being – rightfully – raised by educators who feel overwhelmed by the growing use of this new technology.

Already in use

However, a more pragmatic view is needed. The reality is that schools have been making the most of the capabilities of non-generative AI for years.

Personalised learning tools and pathways that support student learning are a great example of this. So are management information systems tools used by leadership and administrative teams to analyse and understand issues such as attendance and attainment.

AI has therefore already been in use (albeit to varying degrees) in schools across the country, cutting teacher workload, informing decisions made by leaders and helping students to learn.

Taking this view, there is less need to feel daunted by the growing use of generative AI. This technology, in one form or another, has been present in schools for a while – and similar approaches can be taken.

The pillars of digital strategies will remain largely unchanged in the face of generative AI. However, adaptations will of course be needed.

Five key considerations for integrating new technological resources

- Having clarity on the educational purpose, intended use and purported benefits of any tool is critical, whether or not it’s AI-based. The adoption of technology is not a race. School leaders should not feel pressure to begin using any software simply for the sake of appearing ‘cutting edge’. Like all technology, some solutions will build up credible evidence of their efficacy over time. Others will be rightly discounted. Regular re-evaluation to measure tangible impacts will ensure the tool your school selects is the right one.

- Data protection and security should remain a priority; a Data Protection Impact Assessment (DPIA) will identify any potential risks.

- Is the tool programme-agnostic? How will it integrate with pre-existing systems and solutions already in use? This will be crucial in evaluating the potential long-term usage and benefits of new resources.

- Implement a clear, comprehensive usage policy for generative AI resources. Transparency should be a key element of this policy. Make it clear where AI is being used and what for – for instance, acknowledging the role of AI in the creation of any resources used by teachers.

- Support staff with thorough initial training and CPD opportunities, so they can use these tools confidently and efficiently. Without current and regular CPD, any technological resource risks becoming a burden, particularly in the current landscape of rapid innovation.

Students using AI

In addition to the above, it will be essential for educators to understand how their students are already using generative AI.

Recent research from the charity Internet Matters found that 54 per cent of children use generative AI tools for their schoolwork and homework. Despite this, 60 per cent of schools have not spoken to their students regarding the use of AI in this manner.

Remaining up to date with these trends can be tricky. However, edtech vendors and education community forums often share invaluable insights in this regard.

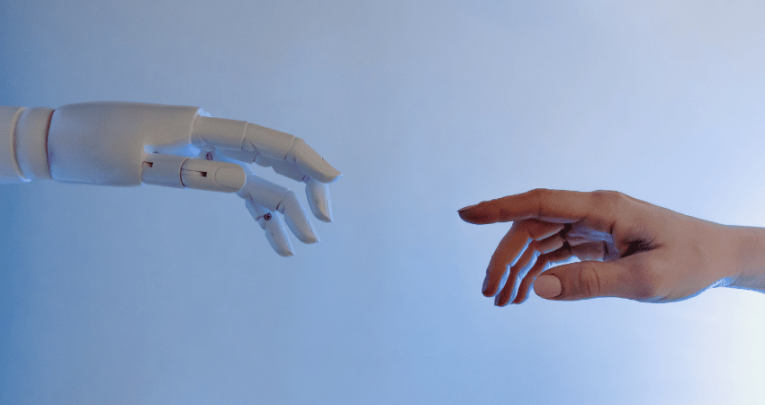

The advent of AI also reinforces the importance and value of the human-to-human dynamic in the learning journey.

The curriculum, and indeed our methods of teaching and assessment, will undoubtedly need to adapt in order to keep pace with the burgeoning AI era.

As AI continues to grow in capability, the balance in education is likely to shift further away from pure knowledge-building. It will move towards the imparting of relevant skills.

To keep pace with the integration of AI into many aspects of our everyday lives, learners must be equipped with the ability to analyse and question the information in front of them, determining how best to utilise and apply knowledge.

Students’ ability to thrive in tomorrow’s world (and workforce) will depend on how educators can help their students in this regard.

What role could AI play in art and design lessons?

Hannah Day considers the role that AI could eventually play in art and design lessons – and the problems it’s causing in the here and now…

This term, as part of the graphics A Level, we looked at colour theory, for which students built colour palettes. One student, rather than selecting and refining his collections, sat back and let the computer do it. I asked him how. “Oh, I just built some code…” he responded, casually.

We then watched as his screen filled square after square with various tones. On the spot, I had to decide what I thought and how I should react to what I was witnessing.

Consider this

My issue with this student’s work was the overall lack of selection or refinement. He was merely accepting a result that some highly advanced software was giving him, rather than evaluating work he had produced by himself and changing it accordingly. In the event, the colour palettes ‘he’ produced were ultimately bland, and the work his code had generated garnered the poorest outcomes in the class.

Artificial intelligence, as applied to the field of art, currently works by taking all the information it can access, and using this to produce ‘new’ creations when prompted to by human input – but it’s a process that ensures the end results will only ever be average. In this case, the student’s colour palettes weren’t as good as they would have been had he simply done the work himself.

That said, a student of art will typically explore how different paints, brushes and marks can change a painting. If that student then uses different AI tools and prompts to create a range of outcomes, selects the best and uses them to further develop their own work – how is that process any different?

Referencing

But then there’s the matter of referencing. Any text that’s repurposed and used in an essay must be clearly referenced and cited. It therefore follows that any use of AI in image creation should be similarly highlighted and explained. What tool(s) were used in the work’s production? What prompt(s) did the student enter? How many outcomes were generated, and how did the student approach those outcomes?

If those elements of a work directly ascribable to the students’ actions, and those that are the direct result of AI technology, could be separately and clearly recorded, that would at least leave open the possibility of assessing that all-important reflection and refinement.

‘Dead’ media

Finally, there are the implications for research. AI can easily generate false information – as neatly illustrated by a photograph I recently saw picturing Picasso, Basquiat, Dali and Warhol all sharing a drink together. I knew immediately that this was an AI-generated image, but students just starting out on their art history journey likely won’t know that these four artists never met together.

To prevent false information appearing in essays and being cited as genuine research, we’ll need to better educate our students in the skill of checking sources, and the importance of locating multiple examples of research to back up their assertions.

In contrast, there’s the humble, yet also mighty book. The thorough editing and fact-checking undertaken by publishers before releasing any title far outstrips the comparative ‘anything goes’ wild west of online publishing.

With the internet becoming ever more swamped with unreliable sources – which are now feeding AI tools that in turn produce yet more unreliable content – perhaps in time we’ll see a true resurgence of books and other printed matter.

Manipulating the paintbrush

So what does an actual artist make of all this? I wanted to talk to someone working across both the tech and art sectors and get their perspective – which is how I found myself in the studio of Dan Catt, who considers himself to be both an engineer and an artist.

Dan writes code, which he sends to a mechanical arm that he built himself. Said arm holds a fountain pen, the movements of which produce beautiful lined drawings.

This, however, is not an example of AI art, because Dan is actively telling the arm what to do. The process is functionally the same as what your own arm would do when manipulating a paintbrush. The only difference is the use of technology being placed front and centre.

In Dan’s view, we’re all trained to varying degrees in the history of art, just as an AI would be, since nothing or no one can work fully independently of the canon of imagery we have. The difference is the speed at which AI can absorb and assimilate this data, and the immediacy and range of its outputs.

He suggests that this can be seen by setting an AI a relatively simple task: “Take light, for example. AI can generate an image of each individual student’s home town, and explore light in the style of 10 different artists. Suddenly, you see the same place specified by the same prompt, but rendered through very different approaches, making comparisons and analysis much simpler.”

Never go ‘whole code’

Another of Dan’s observations concerns idea generation. If you have a student wanting to create a poster for a music festival, they could ask an AI to generate 100 different starting points while keeping the overall theme prompt consistent (such as ‘Glastonbury Festival’), but changing the suggested artist or influence (such as ‘Art Deco’, ‘Milton Glaser’, ‘Paula Scher’ and so on). These can then be utilised in much the same way as one might use a moodboard.

“As an engineer, I want efficiency,” Dan explains. “I therefore plot a whole page of code.” However, while he can predict whether his code will lead to straight, curved or jagged lines, he can’t focus as fully on the aesthetics until a later stage.

Only once the mechanical arm starts drawing can he see how the code is actually building on the page. “I almost always stop the artwork part way through,” he says. “I see it build, and know when it’s reached a point that, for whatever reason, works visually. If ever I let the arm draw the whole code, I’m always disappointed.”

Aesthetic value

More recently, Dan has introduced a new element into his code writing that he calls ‘Co Pilot’. The idea is for Co Pilot to make further suggestions based on code that’s already been written, thus speeding up his processes considerably. Dan is, however, careful to note that Co Pilot doesn’t have any understanding of aesthetic value – it’s still the artist who must decide on that.

I’m relieved to find that Dan’s views, while more practical and developed through his use of technology, coding and nascent AI, otherwise broadly align with mine. Reference, explore, rework; keep the artist as the final arbiter, and think of AI as a useful tool.

Tomorrow’s photography

Before I leave, he shows me some images produced by Midjourney – a generative AI programme and service that’s attracted attention for its sometimes sublime imagery – examples of which have been used as illustrations by a number of media outlets, including The Economist. Dan makes the point that artists and creators now have the option to develop their own artistic style using the same tool.

“Before AI, if you had an idea you also needed the skills to create that idea. Now, using AI, you can create a visual output for that idea. Some people view those working in this way as artists, and some don’t.”

Specialism in its own right

His comment reminded me of the conversations that would have taken place during the early days of photography and still continue to this day. For many, the mechanical nature of the camera, and its ability to create images that can be reproduced infinitely, prevent them from considering photography as art – even some 200 years after the first photographic images were created.

Collectively, we seem to have a deep and perennial desire for the human and the unique. Yet AI is now very much with us, and will only become more sophisticated in its uses and applications over time. Just like the ‘ready-made’ and often conceptual art of photography, the notion that AI won’t similarly become a specialism in its own right appears absurd.

It seems to me that as teachers, we need to get on board and start helping our students understand how AI might influence, and maybe one day even enhance, their artistic journeys.

Hannah Day is head of art, media and film at Ludlow College.

Will AI really change how we teach?

Why are so many voices trying to persuade us that AI in education is the biggest thing to ever happen to schools, and is it the truth? Former teacher and edtech expert Gareth Sturdy investigates…

Have you heard about the school that let its Y5 students design its curriculum? The kids wrote schemes of work and individual lesson plans for their teachers by comparing information across lots of websites, but without any deep knowledge of, or personal engagement with the subjects involved.

Okay, I’m fibbing. That didn’t happen. Y5s may well be capable of Googling and contrasting the results they find. However, it would be absurd to see this as a substitute for the knowledge, informed reasoning and practical skills wielded by teachers when designing a curriculum.

And yet, this is how the artificial intelligence (AI) behind chatbots such as ChatGPT works in practice – with a mentality similar to that of a ten-year-old blankly surfing the net.

So why are so many voices trying to persuade us that AI in education is the biggest thing to ever happen to schools, with the potential to fundamentally transform what they do?

Febrile climate

In April 2023, Prime Minister Rishi Sunak launched a £100m taskforce to exploit the use of AI across the UK. Education was high among said taskforce’s priorities.

At around the same time, however, some of the Silicon Valley moguls who have been instrumental in building AI platforms – including the likes of Elon Musk and Steve Wozniak – penned an open letter warning us all of the technology’s potentially calamitous impacts.

They even called for a temporary moratorium on further development.

The Oxford academic Toby Ord recently told The Spectator that around half of AI researchers harbour similar fears about human extinction stemming from the use of AI. Indeed, one of AI’s foremost pioneers, Geoffrey Hinton, went as far as quitting Google over his concerns regarding the existential risk that machine learning poses to humanity.

What are teachers to make of this febrile climate, in which we herald intelligent machines as both saviours of education and destroyers of civilisation?

The fearful response to AI in education seems of a piece with the apocalyptic mindset the media encourages us to adopt in response to all contemporary global challenges, be they viral, climate-related, military or economic.

Using AI in education

Yet spending just a few minutes toying with ChatGPT or Google Bard should be sufficient to persuade even the most sceptical of how ingenious these tools are, and the myriad potential applications they can be put to in education.

There can be little doubt that AI in education is going to improve the standard of learning resources. It’s also going to free up valuable teacher time. Just as with any disruptive technological development, jobs could be at risk. But on balance, the future will be better with AI.

“On balance, the future will be better with AI”

That said, let’s not get carried away. AI is, at least for now, really, really dumb. John Warner, English teacher and author of Why They Can’t Write, has previously argued that we should be careful in how we talk about the data handling carried out by machine learning algorithms.

Change in consciousness

Warner makes the point that what we’re seeing from them isn’t genuine reading or writing. The so-called ‘Large Language Models’ that currently drive AI don’t actually know anything. They’ve yet to experience any change in consciousness through their learning.

Elsewhere, the musician Nick Cave has written that AI “can’t inhabit the true transcendent artistic experience. It has nothing to transcend! It feels such a mockery of what it is to be human.”

All AI can presently do is identify patterns it has seen before and copy them. There is no imagination at work. It’s not generating new ideas; only reworkings of what’s already been.

AI bots are mere plagiarists, a pastiche of intelligence. Or as the technology writer Andrew Orlowski memorably put it in The Telegraph, “ChatGPT – the parrot that has swallowed the internet and can burp it back up again.”

Simulated understanding

AI cannot impart meaning to anything. Meaning can only ever reside in a human mind. This is crucial for education, which is the creation of meaning by another name.

If platforms like ChatGPT have any use at all, it’s only because human beings have previously assigned meaning somewhere inside them.

The engineers currently fretting about how intelligent AI could become might be better off paying more attention to just how, well, artificial it still is.

Many people have hotly debated these issues over the years, ever since Alan Turing first proposed the ‘Turing Test’ in his 1950 paper, ‘Computing Machinery and Intelligence’.

To answer the question of whether machines could think, he hypothesised a game played between a person and an unseen machine. If the player can’t tell that their opponent isn’t human, the machine passes the test.

Strong vs weak AI

30 years later, a paper titled ‘Minds, Brains and Programs’ by the philosopher John Searle boiled the question down to focus on whether a machine could ever truly understand a language – a situation he called ‘Strong AI’ – or merely simulate understanding, which he dubbed ‘Weak AI’.

He concluded that machines of sufficient complexity could be devised to pass the Turing Test by manipulating symbols, just as the ELIZA project created by computer scientist and MIT professor Joseph Weizenbaum appeared to do, way back in 1966.

As Searle noted, the machine wouldn’t need to understand the symbols it was manipulating in order to provide an illusion of cognition sufficient to pass the Turing Test. Computers running software, on the other hand, wouldn’t be able to achieve Strong AI.

To truly come to terms with the role of AI in education, we need to invoke Searle’s distinction between the understanding and mere simulation of understanding needed to pass a test.

He suggested that the difference between them lies in intentionality. This is the human quality which always directs mental states towards a transcendent end.

Demoting the teachers

What end are we seeking when we educate? What is a student’s real intention when they learn? Do programs like ChatGPT produce knowledge, or a mere simulacrum of it, resulting from mindless rule-following? It’s the answers to these kinds of questions that will ultimately determine the use of AI in education.

Machines aren’t going to ‘take over’ our schools because they simply can’t, regardless of whatever spooky stories their creators like to frighten themselves with.

If kids are using ChatGPT to cheat on their assignments, that should just tell us that we’re setting the wrong sorts of tests. Education isn’t a Turing Test. AI is weak. Machines will always be dependent on humans for any supposed ‘learning’ they achieve.

“If kids are using ChatGPT to cheat on their assignments, that should just tell us that we’re setting the wrong sorts of tests”

Genuine risk

There is, however, one genuine risk. The more teachers come to rely on AI, the more likely it is that AI will reshape and define the meaning teachers give to their own role.

If we end up reducing education to a matter of machine learning, then it follows that teachers might begin to approach their vocation more mechanistically – almost as an automatic process of efficient information transfer perhaps best suited to a production line or call centre.

In such a milieu, the experience of becoming an educated mind, and the struggle and delight involved in that expansion of intellect, could start to seem increasingly irrelevant, rather than what they actually are: the point of the whole exercise.

The threat to education here isn’t posed by machine intelligence superseding that of teachers. It will come from teachers demoting themselves into mere machines. This will cheapen the ideal of learning and undervalue the meaning we assign to education itself.

Gareth Sturdy (@stickyphysics) is a former teacher now working in edtech.

Are robots coming for teachers’ jobs?

The potential for AI to transform education – for better or for worse – might seem exciting, says Harley Richardson – but let’s not get carried away…

There has been a lot of excited talk recently about the threat to jobs posed by automation, robots, and now artificial intelligence: machines that can think like humans.

We’re told that ever more complex tasks can now be automated and perhaps done better as a result, and that we should all be preparing for a world in which we’re competing for work with computers.

Is teaching one of the jobs put at risk by the emergence of AI? Or does AI in education have potential to enhance life in the classroom?

An event organised by BESA, the industry body for education suppliers, provided plenty of food for thought about these questions.

Open-ended system

Sir Anthony Seldon, former Vice-Chancellor of the University of Buckingham and author of The Fourth Education Revolution: Will Artificial Intelligence liberate or infantilise humanity? kicked things off with a bracing polemic about the opportunities and dangers of AI.

He argued that AI can help us move away from the “factory model of education” towards a more open-ended system focused on creativity and problem solving. And he said we’re seeing early signs of what technology can bring us in innovations such as “no lecture hall” universities and courses offering “nanodegrees”.

Apparently, “we need AI machines to teach our students to become more fully human – the education system currently deploys humans to teach our young to become more like machines.”

But at the same time he worried that, if we’re not careful, AI could represent a “massive existential threat”, with the potential to strip vitality out of school and life in general. And it’s coming fast.

Apparently the “singularity” – the point at which the human and the machine merge – may take place as soon as 2040.

Limited capability

For me this is straying into fantasy, and is not justified by the reality of AI technology today. Thankfully, Seldon’s talk was balanced by a more down-to-earth panel discussion at the same event, organised by Claire Fox of Radio 4’s Moral Maze.

Science communicator Dr Kat Arney explained that she is “infuriated by the hype about AI”. She argued this often consists of stunts promoted uncritically by technology journalists with a poor understanding of how computers work.

Mohit Midha, CEO of the education website Mangahigh, pointed out that AI is very good at solving finite tasks and problems – such as winning a game of chess, driving a car or finding a brain tumour – but is quickly confounded when it encounters data outside its frame of reference.

Computers can only do what they’ve been programmed to do. While they may be excellent at identifying patterns at mind-boggling speed, they have no way of giving that data meaning.

Science fiction

As for the nightmare vision of machines that can replicate and improve themselves, eventually challenging us for domination of the world, that still lies firmly in the realm of science fiction.

But let’s assume, for the sake of argument, that the claims made by Seldon and others about AI are realistic. Should AI then have a role in our classrooms? Perhaps even as teacher?

Claire Fox wondered if there’s a danger that AI amounts to “copying humans without the humanity.” This reminded me of the 1951 short story by Isaac Asimov, The Fun They Had, which cautions that replacing teachers with computers would be a loss for adult-child relationships.

We should keep in mind that there are two things machines can never have: morality and imagination.

And there is something implicitly moral about a teacher standing in front of a class of children, sharing their knowledge about the world with members of the next generation. It’s about having the imagination to see a child’s potential beyond what they can do now.

Knowledge and power

Thankfully no one at the event wanted to see teachers being replaced by AI. But perhaps, it was suggested, AI can replace the ‘robotic’ elements of education. This raises the question: what are the robotic parts of education?

Midha argued that AI in education can enable “mass personalisation”. This frees up teachers’ time and allows them to move away from a knowledge-based curriculum. That way they can concentrate on developing higher-order skills.

Along these lines, Priya Lakhani and her team at Century Tech have been conducting some very interesting real-life experiments in AI-based adaptive learning. They claim their software both engages children and saves teachers considerable time.

This is worth keeping an eye on. But the hope that this type of personalisation will reduce our dependence on knowledge is really a variation on the old ‘Why learn any facts when you can just look them up on Google?’

Higher-order skills

The point of learning facts – and developing skills – is that once learned they become part of us. And the process of learning them is often a critical part of developing those higher-order skills.

In real life we are often ambivalent about the deskilling that can be associated with automation. Would we rather take an urgent taxi ride with a driver who has The Knowledge or with a driver who has sat-nav?

How do we feel about reports that surgeons are losing their dexterity because robots are performing operations?

Returning to education, then, what about handing over marking to machines? After all, “I’ve got too much marking to do” is one of teachers’ most common complaints.

As a repetitive and routine task, marking sounds like a great candidate for an AI takeover. But could anything be lost in the process?

Tom Sherrington, author of The Learning Rainforest: Great Teaching in Real Classrooms, has argued that a teacher’s own mark books can be a helpful reminder of how a child is actually doing – and that when those raw marks are divorced from the relationship between the teacher and the pupil and turned into spreadsheet fodder they can easily become misleading.

A little politics

These are just two examples, but I remain to be convinced that there are any straightforwardly robotic elements of teaching.

There’s no doubt a huge amount of mind-numbing non-teaching bureaucracy in education contributing to teachers feeling like robots.

Yet it’s worth noting that the amount of red tape has increased at the same time as computers have become prevalent in schools. If we want to do something about that, we may need to look beyond technology to the realm of politics.

Overly simplistic

I think the idea that schools are following a “factory model of education” is overly simplistic, and unhelpful at best when considering the potential – good and bad – of AI in education.

The chances are that the most useful applications of AI haven’t occurred to anyone yet. And it’s worth reflecting on the progress made by the non-AI technologies which are already in schools.

Interactive whiteboards may be ubiquitous. However, after decades of bold talk about the internet enabling personalised round-the-clock learning, with each child having control of their own education via mobile devices, the reality for most classrooms in the UK is still a teacher teaching and a room full of children learning.

And maybe that’s absolutely fine. Are we trying to solve a problem that doesn’t exist?

Harley Richardson is director of design & development at Discovery Education and a coordinator of the Academy of Ideas Education Forum, writing here in a personal capacity. The Academy of Ideas Forum gathers monthly to discuss trends in education policy, theory and practice.

The AI homework problem

Secondary teacher Ian Stacey reflects on the steps teachers can take to prevent students using chatbots to complete their class assignments for them…

In 2022, a technology quietly appeared with far-reaching implications – AI chatbots. For the uninitiated, these allow users to create pages of text from just a handful of prompt words.

As with previous innovations, teachers have been left to figure out what the impact of this technology is going to be for them and their students. I know that I’ve barely scratched the surface. However, here I share my initial thoughts and some possible solutions.

“Teachers have been left to figure out what the impact of this technology is going to be for them and their students”

Plagiarism

The most obvious issue is plagiarism. If little Johnny can now get ChatGPT to write his coursework for him, why would he want to write it himself? There’s also the related question of whether this counts as theft.

For now, at least, AI-generated writing is largely considered the creation of whoever enters the prompt words, regardless of whether they’ve written, edited or even read any of the generated text.

Needless to say, however, since the whole purpose of coursework is to prove understanding, I don’t want anyone, or indeed anything, writing it besides the students themselves.

The second issue is accuracy. AI chatbots aren’t above citing facts or statistics that are either provably false, or ones they’ve spontaneously generated – i.e. made up.

Related to this is the issue of inappropriate content. You are what you eat. Since AI chatbots are fed by data and content harvested from the internet, this can easily lead to potential complications.

Take the case of ‘Nothing, Forever’, for example. This was a project that was set up with the fun aim of creating an AI-scripted sitcom. However, it had to be temporarily shut down after creating material deemed to be transphobic.

Humans versus machines

An initial solution proposed within our school was to try to block ChatGPT and similar sites using network-level security. But this isn’t a long-term answer.

Pupils can simply access the same sites, services and software at home. Plus, a growing number of AI chatbots are joining ChatGPT. How could any school ever hope to track them all?

Another solution then presented itself in the form of ‘classifiers’. This is a new class of software which promises to help teachers, publishers, employers and other interested parties to distinguish between text produced by software and original material written by a human being.

They still have some way to go though. The current generation of classifier software only produces an accuracy score of around 26%.

Perhaps the real answer lies in steps that we can take during the drafting process. I’ve started asking my pupils to complete a first draft of their assignments composed purely in bullet points.

The advantage of this is that children are less likely to copy and paste text they don’t understand into this kind of format, where the substance of what their essay is actually saying is much clearer.

At a later date, some time after the point where what they’ve written is no longer so fresh in their minds, you can then task pupils with revising their initial drafts by turning them into properly structured essays with standard paragraphs.

Learning exercises

Whatever we might think of AI as a tool in its current form, the technology is here to stay. It will be playing an ever larger role in our schools, whether we like it or not. We can almost certainly expect the pupils of today to be routinely using it once they’ve entered the workplace.

Given that a significant part of a school’s role is to prepare pupils for the next stage of their life, we must teach them how to use it effectively.

I know enough to say that I’m not ready to do that yet myself. I would, however, maintain that introducing a process of ‘redrafting’ – where AI suggests changes and alterations students can make to their early drafts, so that they’re written more cohesively – could be one example where pupils can use AI to help them with a useful learning exercise.

Disadvantage gap

And yet, there’s one final problem I can foresee which neither I nor my colleagues have any kind of answer for yet. This is the creation of a huge gap between advantaged and disadvantaged pupils.

ChatGPT and its better-known rival chatbots are, for the most part, free for anyone to access. While they can certainly generate ‘unique’ text at impressive speed, the end result will often appear somewhat clunky in terms of sentence construction.

However, it’s now possible to access a number of subscription-based chatbots. These are apparently capable of generating text to a much higher standard. Could we be witnessing the birth of a new phenomenon, whereby more affluent pupils are able to ‘cheat’ more convincingly, and obtain improvements to grades that were already better than those of their peers to start with?

Ultimately, there are many possible directions in which AI chatbots and related technologies could take us, both good and bad. Those are some of my thoughts – I’m eager to hear what everybody else has to say…

Ideas for avoiding AI homework

Drafting

At the start of the writing process, give pupils a series of short prompts to research. Crucially, explain that students need to record the details they uncover in the form of bullet points, while keeping the sentences as simple as possible.

Moving on

At this stage, pupils must put aside the bullet points they’ve researched until they’re no longer easy to recall from memory.

Cohesive devices

Give pupils a refresher on how to use cohesive devices (which they should previously have covered in Y6). This includes sentence openers, conjunctions, prepositions and so forth.

Writing

The pupils then revisit their notes and employ cohesive devices to turn them into full length pieces of writing.

Ian Stacey is a product design and food teacher based at a comprehensive school in Essex.

How AI can save teachers time

AI isn’t much of a teacher. However, it can be a great administrative assistant, write Professor Geoff Baker and Craig Lomas…

“Forget robot teachers, adaptive intelligent tutors and smart essay marking software. These aren’t ‘the future’ of artificial intelligence in education, but merely a step along the way.”

– Rose Luckin, UCL professor and co-founder of the Institute for Ethical AI in Education

You don’t have to search far before stumbling across a blog or article offering tips and advice for overworked teachers lacking even the time to finish the now-cold beverages they thought would get them through the morning.

The emergence of artificial intelligence in recent years, however, has introduced a new element to these discussions. It’s one that might finally make good on that well-worn expression, ‘Work smarter, not harder’, and offer a way out of the time dilemma.

Changing the discussion

Research indicates that many professional development programmes are actually ineffective in supporting changes in teachers’ practices and student learning.

Multiple reviews conducted by the DfE (most recently in 2023) have found that one of the biggest barriers to professional progression cited by teachers continues to be their workload. Teachers simply lack the time needed to invest and properly engage in professional development.

It might not be the only such barrier. However, it remains the key reason as to why many teachers fail to invest in their practice in any meaningful way.

Endless possibilities

Yet AI may be about to change the direction of this discussion for good. The hype around AI, and its transformative potential within education, is still hotly debated. However, it’s now become clear that existing AI technology can make a dramatic difference to teachers’ working lives.

Take ChatGPT – a large language model that can perform an inordinate number of tasks in a matter of minutes, when given appropriate prompts.

The use of ChatGPT for educational purposes, and the ethical considerations that entails, is perhaps a topic for another discussion. But one thing we can say for certain is that it can complete all manner of tasks that might otherwise take away teachers’ precious time. That’s everything from the creation of schemes of work and teaching resources, to essential data analysis, marking and feedback.

The potential applications of this software are almost endless. With suitable training on how to interact with it, and provide adequate detail in the prompts, ChatGPT can free up teacher time to be spent in ways that have far more impact.

So, if AI can give the gift of time, what else can it do?

Direct CPD

AI software can also be used to supplement and enhance professional development programmes in a more direct way. Besides workload, other professional development restrictions can include the often generic nature of the content used within CPD sessions, as well as the traditional ‘training model’ approach to whole school delivery. This was identified in 2005 by Aileen Kennedy of the University of Glasgow’s School of Education.

This typically sees all staff congregate in their school’s main hall or theatre. You’re then talked at for the next 60 minutes by an outside ‘expert’.

While some schools have evolved their practice in this regard, others haven’t. Some don’t even view such approaches as problematic.

Through AI software, again utilising ChatGPT, the first of these issues can be addressed. Generic content in professional development sessions, whilst unavoidable in some cases, is usually equivalent to delivering the same content in the same manner to all the students you teach, with little to no consideration for differing levels of ability or need. We don’t do it in our lessons, so why is it seen as appropriate for teacher training?

Variations in needs

Unfortunately, we don’t always practise what we preach. Approaches to professional development don’t always draw on and reflect our collective knowledge base around the fundamentals of learning.

Take, for instance, a session on questioning. It could be argued that this is a universal skill that every education professional ought to develop further, but at the same time, there are numerous complexities involved, different layers to questions and types of questioning.

A teacher’s prior experience, abilities, confidence around implementation and subject specialism will all factor into their specific training needs. Whilst we may all need to develop aspects of questioning, there will likely be variations in those needs.

Facilitation, not revolution

By utilising ChatGPT, we can create bespoke learning pathways in real time. Teachers can input their own existing experience levels, specific needs, and desired outcomes, whereupon ChatGPT can generate:

- tailored development plans or learning pathways that include a structured plan of action

- wider reading recommendations and specific strategies (often based on up-to-date research-based pedagogy)

- modelled examples

Admittedly, not all the resulting suggestions may be of practical use, but ChatGPT can at least consistently create that often elusive starting point for professional development.

It can prevent the dreaded temptation to procrastinate because you simply don’t know where to begin. It can provide a starting framework, within which you can then develop and start to evolve your professional development.

Beyond those valuable starting points and guiding frameworks for professional development, ChatGPT can also be used as a research tool, saving teachers precious time by summarising articles, chapters or even whole books prior to reading them, to help you determine their relevance (or otherwise) to your particular avenue of research.

Beyond the hype cycle

Moreover, ChatGPT can generate recommended reading lists based on specific areas of need, as well as help you develop initial hypotheses and enquiry questions, along with appropriate methodologies, to help begin the process.

Below, you’ll find five practical uses of ChatGPT, along with some suggested command prompts, which you can use to further explore the potential AI has to support teacher workload.

Ultimately, we know that it’s the quality of teaching that makes the biggest difference to children’s learning, and to school improvement as a whole – for which a sustained and carefully considered professional development plan is essential.

So, will AI revolutionise education, as the hype cycle seems to insist? We’re yet to find out for sure, but there’s another, perhaps more important question that we should be asking: can AI make teachers’ lives easier by assisting with key elements of teacher practice? And the answer to that question is yes. Absolutely.

Example ChatGPT command prompts to try

1. Lesson preparation and administration

You can create more time for yourself by enlisting the help of ChatGPT for tasks such as lesson plans, creating resources, marking work and analysing data.

Example prompt: Plan a 60 minute lesson on glaciation for a Year 7 mixed ability class

2. The generation of specific learning pathways

Counter the tendency towards ‘one size fits all’ teacher training by focusing your professional development on specific areas of need.

Example prompt: I am an ECT struggling with management of low-level behavioural issues. Can you suggest some strategies to move forward?

3. Streamlining research

Use ChatGPT to summarise articles before reading them, so that you can acquire better insights into whether or not they’re relevant to the field you’re researching.

Example prompt: Summarise Luckin, Rose (2020) ‘AI in education will help us understand how we think’

4. Helping you read more widely

ChatGPT can be used to recommend wider reading around a particular area of need, or just broad topics that you happen to be interested in.

Example prompt: Suggest key texts on AI in education

5. Critiquing your teaching

Another possible use for ChatGPT is to gauge the likely effectiveness of your lesson planning; you can then use its findings to help you better reflect on your practice.

Example prompt: I plan to do a group task with a mixed-ability Year 8 class. What might the limitations of this approach be?

Geoff Baker is a Professor of Education and Craig Lomas a Senior Lecturer in Education, both at the University of Bolton, and both former senior secondary school leaders.

Could AI lead to exam-free education?

With driverless cars on the road and robots assisting doctors in surgery, exam-free education needn’t be science fiction, explains Rose Luckin, chair of learning with Digital Technologies, UCL Institute of Education…

Every year when SATs come around, we all have to read about the stress and anguish they are causing. And each year I sigh and ponder why we’re persevering with such a blunt tool to undertake the important task of assessing our children’s progress.

Until recently, you might reason that we haven’t had a credible alternative that can paint a reliable picture of the progress of every schoolchild in the country.

“Exam- and test-free education could be a reality, if we want it to be”

But as of now, the continuing development of reliable artificial intelligence technologies is providing an alternative that can enable us to move beyond these outdated testing systems.

Impact on assessment

But how exactly, you might ask, could this have an impact on assessment? Artificial intelligence can be defined as the ability of computers to behave in ways we think of as essentially human. This includes using speech recognition and decision-making to interact with the world.

The power of this technology is that it’s capable of processing huge amounts of data in order to carry out a function. This might be recognising a face or deciding on a chess move.

The use of artificial intelligence in our daily lives has increased exponentially, to the point where it has become almost ordinary.

We often use Google’s intelligent search, or have our faces recognised in the ePassport gates at airports. We’ve reached the stage where AI in education is more than capable of assessing our children’s learning. We’re also more than capable of designing and building the systems to do it.

Exam- and test-free education could be a reality, if we want it to be.

How would it work?

AI is a powerful tool that can open up the ‘black box’ of learning. It can provide a deep, fine-grained analysis of what pupils are doing as they learn. This means their learning can be ‘unpacked’ as it happens.

It can do all this discreetly in the background, processing information about learners’ activities rather than being an obvious and stressful presence for students and teachers who feel constantly under observation.

But utilising this technology for assessment depends upon various factors, in particular the subject in which it’s being used. For example, AI techniques such as natural language processing, speech recognition and semantic analysis would be required to measure speaking and listening skills or language learning. On the other hand, you might use a simulation game to appraise scientific understanding.

Common features

There are many possibilities, but all AI assessment tools have some features in common. Each possesses knowledge of:

- The subject or skill being learnt

- The details of the steps each child takes as they complete their learning activities

- What counts as success within each learning activity, and within each of the steps towards the completion of those activities

Some of this technology also builds up knowledge about children’s motivation, confidence and self-awareness.

AI techniques, such as computer modelling and machine learning, are applied to these three common knowledge areas. This enables the technology to make a judgement about every child’s progress.

This might assess the development of a child’s subject knowledge. However, it can also look at skills, such as collaboration or persistence, and characteristics such as confidence and motivation.

Open Learner Models

Once all of this processing and analysis has taken place, the results can be presented to teachers, parents and learners as visualisations that represent a child’s knowledge, skills and progress. This helps everyone understand each student’s performance and needs.

These visualisations are called Open Learner Models (OLMs), because they open up the processes that underpin a learner’s performance.

On top of this, the information collection and processing carried out by the assessment technology can take place over a specific period of time – certainly much longer than an SAT takes to complete. This gives a far more rounded evaluation.

It could be conducted over a few weeks, a term, a year or longer. And because the process is carried out while lessons are happening, it doesn’t require teaching and learning to stop in order for it to take place.

This is very much in sync with how day-to-day education is carried out. Teachers are continually involved in formative assessment as part of the teaching-and-learning process. Artificial intelligence can offer great assistance in making this process more efficient and traceable.

Long-term benefits?

AI technologies are bringing significant changes that will affect the future of the UK workforce and job market. This, in turn, necessitates changes in education, skills and training at all levels, including primary schools. So there are two big reasons why these digital assessment tools have a key role to play.

Firstly, subject-specific knowledge and routine cognitive skills are the easiest things to automate in the workplace through AI technology. So these alone will be of little benefit to learners when they get to the modern workplace.

We must, therefore, move towards an assessment system that tells us about our children’s progress in mastery of skills such as critical thinking, collaborative problem-solving and creativity, as well as their mastery of subject knowledge.

Using AI in education enables us to broaden the range of abilities we measure. This means we can ensure children are developing the knowledge and skills that will help them prosper.

Symbiotic relationship

Secondly, in order to benefit from the potential future AI-enhanced workplace, we need to educate people to work effectively with these systems.

Everyone will have to understand enough about them to work with them effectively, so that AI and HI (human intelligence) augment each other and we benefit from a symbiotic relationship between the two.

For example, people need to know what they can expect from an AI system, and what information they need to provide to it so that it will produce the desired outputs.

Children who use these assessment systems from an early stage in their education will develop invaluable skills that will give them a better chance to prosper professionally later in life.

In many ways, assessment is the holy grail of education. The system currently in operation is the lynchpin for much school planning. It’s also the cause of angst for many educators and policy makers.

Students, schools and whole countries are judged on their success according to whatever assessment system is in place at the time of judgement.

The use of AI for this process opens up the possibility of transforming education by enabling the progress of a single child, a whole school or the entire country to be evaluated continuously, with much greater accuracy and far less anxiety.

Does digital assessment raise any moral quandaries?

AI assessment does raise a significant ethical question. We know that the sharing of data is essential to the adoption of such systems. The sharing of this anonymous information has the potential to move the field forward.

However, this type of sharing introduces a host of problems and questions. These range from individual privacy to a general lack of AI literacy. Initially it may also bring significant training issues for staff.

But once these issues are addressed, data sharing could give teachers the ability to adopt AI technologies across the curriculum.

Case study: Using AI tech to teach DT at Seven Kings School

Gurpal Thiara, learning leader of design technology at Seven Kings School, explains how a virtual duck has improved the teaching in his department…

Design and Technology by nature is a subject that needs a reflective approach. It requires opportunities to broaden thinking by analysing what you have done well and how to improve. It consistently evolves.

We have always been interested in cutting-edge technology and new strategies that will enhance and improve the learning experience for our D&T students.

So, when we were approached about a government-backed trial to realise the benefits of artificial intelligence in the classroom, we were keen to take part.

The technology, FormativeAssess, is a web application that uses machine learning to provide live feedback to students, in the form of an avatar. In our case, it was a duck.

It began with a meeting with tech company Digital Assess, Goldsmiths, University of London, and the academics behind the technology.

We discussed the questions that the technology would ask the pupils, and how we would implement it. FormativeAssess was then set up on our existing computer hardware. From there it was straightforward to access over the internet.

Immediate positive response

It received an immediate positive response from students across different year groups. They are so tech-savvy that they understood it instinctively and took to it straight away.

The open questions it asked them as they engaged with it made them think of the brief in a broader context. By challenging their perception of what the problem was, they thought harder about the solutions.

Another observation was that it helped the students become more independent, as they realised that they held the answers themselves.

The technology helped to shake up their thought processes, but the ideas actually came from them. The psychology behind the questions meant that students were focused, rather than just opting to stay in their comfort zone. They were more adventurous and trusted themselves to come up with the right answer.

This type of independence is so important because of the way that the current education system focuses on academia – only caring about the outcome, not the journey.

It stifles creativity rather than empowering it. Most “education technology” that we’ve seen over the years only enables students to regurgitate knowledge.

Teachers need to become a beacon for the empowerment of students by taking up these tools and pushing for change.

More time to teach

Time is so precious in the classroom, but teachers need to have discussions to inspire and challenge. This is especially true at the start of an open-briefed project. Here, the kind of questions asked are designed to give a much broader perspective.

However, it can also be just as important in the middle or end. One of the things the avatar asked was “Where are you in the project?”

It then differentiated the follow-up questions based on the answers it received. From a teacher’s perspective, the technology gave me more time to spend with pupils that really needed it.
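As a rough illustration of what differentiating follow-up questions can look like, here is a minimal Python sketch. It is not how FormativeAssess works: the real tool uses machine learning, whereas this example uses simple keyword matching. The keywords, the function names and most of the follow-up questions are invented for the example. Only the custard question comes from the trial itself.

```python
# Hypothetical follow-up questions for each project stage.
FOLLOW_UPS = {
    "start": [
        "Imagine your product was made out of custard. What would change?",
        "Who else might have the problem described in the brief?",
    ],
    "middle": [
        "Which part of your design are you least sure about?",
        "What would you try if you had to start this part again?",
    ],
    "end": [
        "How does your finished product answer the original brief?",
        "What would you improve with one more week?",
    ],
}

# Assumed keywords that hint at a stage. Anything else counts as "middle".
START_WORDS = ("start", "beginning", "brief")
END_WORDS = ("finish", "final", "evaluat")


def classify_stage(answer: str) -> str:
    """Guess the project stage from a pupil's answer using keywords."""
    text = answer.lower()
    if any(word in text for word in START_WORDS):
        return "start"
    if any(word in text for word in END_WORDS):
        return "end"
    return "middle"


def follow_up_questions(answer: str) -> list[str]:
    """Return the follow-up questions that match the pupil's stage."""
    return FOLLOW_UPS[classify_stage(answer)]


# Example: a pupil replying to "Where are you in the project?"
for question in follow_up_questions("I'm building my prototype"):
    print(question)
```

A pupil who replies that they have just started on the brief would get the broad, open questions, while one who is nearly finished would be asked to evaluate their work.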

Scaling good practice

We hear a lot of talk about robots replacing jobs, but this example of AI in education achieves the opposite. It’s a tool to help teachers scale good practice, and if used correctly will make the teacher a better practitioner.

We should be embracing technology that aids us to enhance the learning process, rather than fearing it.

The overall outcome was that the AI changed the thinking of the students. Most of those taking part produced work that was more creative, explorative and experimental.

The students were empowered through FormativeAssess and the focus on the process, rather than a grade at the end. Attitudes changed, and they believed that they could succeed. Breaking through that psychological barrier really opened up the learning.

If this type of AI technology could be integrated into classrooms on a wider scale, I believe it would have a massive impact. Students and schools would really benefit, especially for project-based tasks.

Ultimately, if it adds to the whole student learning experience, then why not?

5 things the trial taught us about AI in education

It encourages independence

In a subject like DT, which places huge importance on continuous improvement, it’s key that students are encouraged to think imaginatively and autonomously. The technology doesn’t give them the answers, but helps them develop a solution themselves.

Creative questioning is key

The key to success lies in the open questioning the machine learning uses. My suggestion would be to make the “what stage are you at in your project?” question more focused, to challenge pupils right at the start so they can begin to think more creatively. For example, the question that asks pupils to “imagine your product was made out of custard” empowers them to think completely differently – more laterally – throughout the task.

There’s nothing to fear

Teachers don’t need to be afraid of the onset of this new technology. It cannot replace teaching, but if used properly it can be a useful resource. The students are already so technologically savvy that they can pick it up and run with it. We should be embracing the benefits rather than avoiding them.

It’s not complicated

This technology is easy to implement provided the school already has the IT infrastructure. At Seven Kings School, we have laptop trolleys and internet access. This meant it was very straightforward for the pupils to log on and access the programme online. Apart from a few minor issues when the machine learning was first switched on, which were quickly resolved by the company, the trial ran smoothly.

It frees teachers to teach

Artificial intelligence can lead to a valuable increase in differentiation time for teachers. Whilst the technology gives each student a form of tutoring, helping them to generate solutions to the tasks given to them, teachers can focus on the pupils who are struggling or need extra assistance face-to-face.

AI at Bett

Visit the Bett Show to see real-life examples of educators using AI in the classroom. Hear how it can support teaching, learning engagement and assessment.
